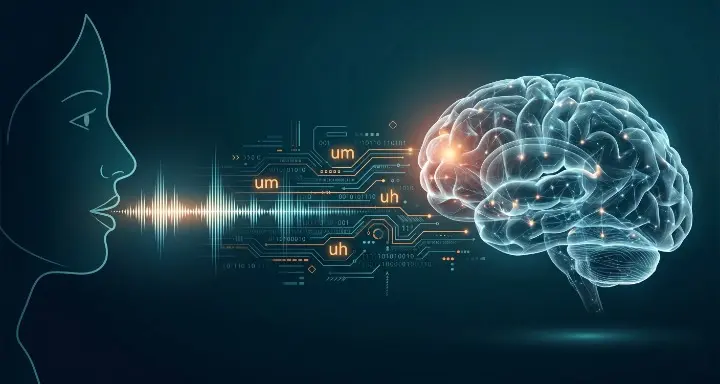

New research suggests that your daily speech patterns may reveal how your brain is aging, years before memory problems begin

Every time you search for a word that won’t come, pause mid-sentence, or reach for an “um” to fill the gap, your brain may be telling you something. Not only about your vocabulary. Not only about your nerves. Potentially about the biological state of your prefrontal cortex, your processing speed, and protein deposits that may already be building up in brain regions where you will not notice their effects for years, possibly a decade.

Researchers are now building a case for an idea that could change how we think about early detection of cognitive decline: your everyday speech works like an accidental cognitive test, and you have been taking it every day of your life without knowing it.

The Experiment That Started With a Picture

The core research behind this finding comes from a study by scientists at Baycrest, the University of Toronto, and York University, published in the Journal of Speech, Language, and Hearing Research. The design was deliberately simple. Participants aged 18 to 90 were shown detailed images and asked to describe what they saw in their own words. Nothing clinical. Nothing formal. Just natural, unscripted speech about a picture.

While they spoke, AI-driven software analyzed hundreds of features in their speech recordings. How fast were they talking? How long were their pauses? How often did they use filler words like “uh” and “um”? How frequently did they seem to search for a word before finding it? The same participants then completed a standard battery of tests measuring executive function: working memory, inhibition, mental flexibility, processing speed, and planning ability.
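
To make the measures described above concrete, here is a minimal Python sketch that computes speech rate, pause statistics, and filler-word rate from a word-level transcript with timestamps, the kind of output an automatic speech recognizer can produce. The `Word` record, the `timing_features` function, the 0.25-second pause threshold, and the filler list are all hypothetical illustrations. They are not the feature definitions used in the Baycrest study or by Winterlight Labs.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Word:
    """One recognized word with start/end times in seconds (hypothetical ASR output format)."""
    text: str
    start: float
    end: float


# Illustrative settings, not values taken from the study.
FILLERS = {"uh", "um", "er", "ah"}
PAUSE_THRESHOLD_S = 0.25  # shorter gaps are treated as ordinary transitions between words


def timing_features(words: list[Word]) -> dict[str, float]:
    """Compute simple speech-timing features from a timestamped transcript."""
    if len(words) < 2:
        raise ValueError("need at least two words")
    duration_min = (words[-1].end - words[0].start) / 60.0
    if duration_min <= 0:
        raise ValueError("timestamps must span a positive duration")
    # Silent gap between the end of each word and the start of the next.
    gaps = [nxt.start - cur.end for cur, nxt in zip(words, words[1:])]
    pauses = [g for g in gaps if g >= PAUSE_THRESHOLD_S]
    fillers = sum(1 for w in words if w.text.lower().strip(".,!?") in FILLERS)
    return {
        "words_per_minute": len(words) / duration_min,
        "pause_count": float(len(pauses)),
        "mean_pause_s": mean(pauses) if pauses else 0.0,
        "filler_rate": fillers / len(words),  # fraction of words that are fillers
    }


# Made-up example: a short picture description with one filler and two long gaps.
sample = [
    Word("the", 0.00, 0.20),
    Word("boy", 0.25, 0.50),
    Word("um", 1.10, 1.30),
    Word("is", 1.90, 2.00),
    Word("reaching", 2.05, 2.50),
    Word("for", 2.55, 2.70),
    Word("something", 2.75, 3.00),
]
print(timing_features(sample))
```

In this made-up sample, the two gaps of roughly 0.6 seconds on either side of the “um” count as pauses. Seven words spoken over three seconds give a rate of 140 words per minute. A real system would also have to detect word-finding hesitations and repetitions and cope with recognizer errors, all of which this sketch ignores.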

The relationship between what the AI heard in the speech and what the cognitive tests measured was significant. Subtle features of speech timing, particularly pauses, fillers, and word-finding hesitations, were strongly linked to executive function scores across the full adult age range studied. The association held after accounting for age, sex, and education level. This suggests the speech signal reflects cognitive function itself rather than demographic differences.

“The message is clear,” said Jed Meltzer, senior scientist at Baycrest’s Rotman Research Institute and senior author of the study. “Speech timing is more than just a matter of style. It is a sensitive indicator of brain health.”

What Executive Function Actually Controls

To understand why this matters, it helps to be precise about what executive function is. This isn’t memory in the narrow sense of remembering names or dates. Executive function is the set of cognitive processes that coordinate much of the rest of our thinking: the ability to hold multiple pieces of information in mind at once, to filter out irrelevant stimuli, to switch between mental tasks, to plan sequences of actions, and to inhibit impulsive responses in favor of deliberate ones.

These functions depend heavily on the prefrontal cortex and can be among the first to show measurable decline in the early stages of neurodegeneration. They are also among the hardest to detect in a standard clinical encounter, because patients often compensate for early deficits in ways that a doctor asking routine questions will not see. A person can appear cognitively normal while their executive function is quietly declining, below the threshold of obvious observation.

Speech timing appears to capture the processing speed that underpins executive function. Producing language in real time requires the brain to do several things in rapid, overlapping succession:

- retrieve words from memory
- organize them grammatically
- sequence them in a meaningful order
- execute the motor commands for speech

When any part of this system slows, the slowdown can show up in the timing of the output, sometimes before it is apparent anywhere else.

The Brain Proteins Already Showing Up in Speech

The most striking evidence connecting speech patterns to early Alzheimer’s biology comes from a separate study by researchers at Stanford University, Boston University, and the University of California, San Francisco, published in Alzheimer’s & Dementia. The team analyzed data from 238 cognitively unimpaired adults drawn from the Framingham Heart Study. All had completed cognitive testing and undergone PET brain scans measuring amyloid and tau protein levels.

The finding was stark. Speaking more slowly, and pausing longer and more often during memory recall tasks, was linked to greater tau protein accumulation in two specific brain areas: the medial temporal lobe and early neocortical regions. Both are known to be primary sites of early tau pathology in Alzheimer’s disease.

The detail that makes this finding particularly significant is what was not associated with tau burden: the memory score itself. Participants with elevated tau were not yet struggling to recall the correct answer. They were simply taking longer to retrieve and deliver it. Their speech had slowed, but their memory had not yet visibly failed. This may be precisely the window that matters most for intervention, and speech timing appears to sit inside it.

Separately, people with greater evidence of amyloid plaque in their brains were found to be 1.2 times more likely to show speech-related problems. This adds another biological link between the proteins that define Alzheimer’s pathology and the way language unfolds in conversation.

What AI Is Now Able to Hear

A critical enabler of this line of research is the maturation of AI-based speech analysis. Clinicians listening to a conversation cannot reliably detect the millisecond-level timing differences that distinguish healthy processing speed from slowing processing speed. The differences are real but too small to judge reliably by ear. AI can measure them at scale, across hundreds of features at once.
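
Measuring pauses starts with the audio itself. One simple, widely used approach is to split the recording into short frames, compute each frame’s energy, and treat long runs of low-energy frames as silence. The Python sketch below applies this to a synthetic signal. The function names, the 10 ms frame size, the energy threshold, and the 200 ms minimum pause are illustrative assumptions. The article does not describe how the study’s software detects pauses, and real recordings with background noise would call for more robust voice-activity detection than a fixed energy threshold.

```python
import math


def frame_rms(samples: list[float], frame_len: int) -> list[float]:
    """Root-mean-square energy of each non-overlapping frame; a trailing partial frame is dropped."""
    n_frames = len(samples) // frame_len
    return [
        math.sqrt(sum(x * x for x in samples[i * frame_len:(i + 1) * frame_len]) / frame_len)
        for i in range(n_frames)
    ]


def detect_pauses(
    samples: list[float],
    sample_rate: int,
    frame_ms: int = 10,
    rms_threshold: float = 0.02,
    min_pause_ms: int = 200,
) -> list[tuple[float, float]]:
    """Return (start_s, end_s) for each low-energy stretch lasting at least min_pause_ms."""
    frame_len = sample_rate * frame_ms // 1000
    silent = [r < rms_threshold for r in frame_rms(samples, frame_len)]
    pauses: list[tuple[float, float]] = []
    run_start = None  # index of the first frame in the current silent run
    # A trailing non-silent sentinel closes any silent run at the end of the recording.
    for i, is_silent in enumerate(silent + [False]):
        if is_silent and run_start is None:
            run_start = i
        elif not is_silent and run_start is not None:
            if (i - run_start) * frame_ms >= min_pause_ms:
                pauses.append((run_start * frame_ms / 1000, i * frame_ms / 1000))
            run_start = None
    return pauses


# Synthetic test signal at 16 kHz: 0.5 s tone, 0.4 s of silence, 0.5 s tone.
SR = 16_000


def tone(seconds: float, freq: float = 220.0, amp: float = 0.5) -> list[float]:
    return [amp * math.sin(2 * math.pi * freq * n / SR) for n in range(int(SR * seconds))]


signal = tone(0.5) + [0.0] * int(SR * 0.4) + tone(0.5)
print(detect_pauses(signal, SR))
```

The single detected pause runs from 0.5 s to 0.9 s, matching the inserted silence. Its duration is the kind of raw timing measurement that feature sets like the ones described in this article build on.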

The Baycrest study used algorithms developed by the Toronto-based company Winterlight Labs. Its technology analyzes speech for:

- sentence complexity
- word repetition
- use of rare vocabulary
- pause duration
- speech rate
- fluency markers

Such systems aim to extract a cognitive fingerprint from a short audio sample, such as 60 seconds of speech. In this research, speech features predicted performance on formal tests of executive function. Their appeal is that this fingerprint can be captured repeatedly and cheaply. Formal cognitive tests in clinical settings, by contrast, are one-time snapshots, vulnerable to anxiety, practice effects, and testing conditions.

Natural speech is hard to fake consistently over time. A single recording can be affected by how well someone slept before a doctor’s appointment. Repeated samples, however, tend to average out such day-to-day noise and reflect the baseline state of the cognitive hardware running beneath it.

The Signal That Hides in Plain Conversation

One of the most disorienting implications of this research is how visible the signal has been all along. The people closest to someone experiencing early cognitive decline (family members, close colleagues, longtime friends) often sense that something has changed in how that person talks before any formal diagnosis is considered: a slight increase in pausing, more frequent “ums,” a tendency to lose the thread of a complex sentence midway through. These observations are typically dismissed as aging, stress, or distraction.

The research suggests these observations may be genuine signs of neurological change that nobody was equipped to interpret. A brain that is accumulating tau and losing processing speed may be revealing that change in everyday conversation. The signal may have been there all along. The tools to decode it reliably are only now arriving.

Researchers are already exploring passive, continuous speech monitoring as a long-term cognitive tracking method. The idea is to use voice data collected through everyday devices to establish individual baselines and flag meaningful deviations over time. The goal is not a single diagnostic test but a longitudinal record of how someone’s speech evolves. Such a record could make a subtle acceleration of decline detectable years before clinical symptoms emerge.
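
The baseline-and-deviation idea can be illustrated with a deliberately simple rule. Compare each new session’s value with the mean and standard deviation of the same person’s previous sessions, and flag it if it lies too many standard deviations above that baseline. The Python sketch below assumes one summary measure per session (mean pause length), a 10-session window, and a threshold of 2 standard deviations. The function name `flag_deviations` and all of these settings are hypothetical choices, not a method described by the researchers.

```python
from statistics import mean, stdev


def flag_deviations(history: list[float], window: int = 10, z_threshold: float = 2.0) -> list[int]:
    """Return indices of sessions that sit well above the person's own recent baseline.

    history: one speech-timing measure per session, oldest first (here, mean pause
    length in seconds). Each session is compared with the `window` sessions before it.
    The test is one-sided because the concern is pauses getting longer.
    """
    flagged = []
    for i in range(window, len(history)):
        baseline = history[i - window:i]
        mu, sd = mean(baseline), stdev(baseline)
        if sd == 0:
            continue  # a perfectly flat baseline gives no scale for a z-score
        if (history[i] - mu) / sd >= z_threshold:
            flagged.append(i)
    return flagged


# Made-up weekly averages: stable around 0.5 s, then a jump in the final week.
weekly_pause_s = [0.50, 0.52, 0.49, 0.51, 0.50, 0.48, 0.52, 0.50, 0.51, 0.49, 0.50, 0.62]
print(flag_deviations(weekly_pause_s))
```

Only the final week is flagged. A single flagged session could just as easily reflect a cold or a bad night’s sleep, so a longitudinal system would more plausibly require deviations to persist across several sessions, or look for a sustained trend, before treating them as meaningful.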

Why This Window Matters

The preclinical window of neurodegeneration has become the central focus of Alzheimer’s research because this is when intervention is likely to have the most biological leverage. By the time memory symptoms are obvious enough to prompt a clinical visit, the brain has typically undergone years of neuronal loss that cannot be reversed. The therapeutic window for disease-modifying treatments appears to be earlier: the period when tau and amyloid are accumulating but performance on standard cognitive tests still looks normal.

Speech timing, as a continuous, passive, non-invasive signal of what is happening at the biological level, could provide access to that window without a blood draw, without a brain scan, and without a clinical appointment. The cognitive test has been running in the background of every conversation. We are finally learning to read the results.

Sources:

1. Primary speech and executive function study (Journal of Speech, Language, and Hearing Research, 2025) Wei, H.T., Kulzhabayeva, D., Erceg, L., et al. Natural Speech Analysis Can Reveal Individual Differences in Executive Function Across the Adult Lifespan. Journal of Speech, Language, and Hearing Research, 2025; 68(12): 5708. DOI: 10.1044/2025_JSLHR-24-00268 https://pubs.asha.org/doi/10.1044/2025_JSLHR-24-00268

2. Stanford tau protein and speech patterns (Alzheimer’s & Dementia, 2024) Young, C.B., Smith, V., Karjadi, C., et al. Speech patterns during memory recall relates to early tau burden across adulthood. Alzheimer’s & Dementia, 2024; 20(4): 2552–2563. DOI: 10.1002/alz.13731 https://alz-journals.onlinelibrary.wiley.com/doi/10.1002/alz.13731

3. Validated speech features and tau PET burden (Boston University/Stanford, December 2025) Li, Z., Young, C.B., Karjadi, C., et al. Automated Speech Features from Logical Memory Delayed Recall Test Relate to Early Tau PET Burden. Alzheimer’s & Dementia, 2025. DOI: 10.1002/alz70856_102373 https://www.ncbi.nlm.nih.gov/pmc/articles/PMC12739232/